In this article we’ll present the results of a website optimization process that we have recently completed. Caution! Jargon and geeky language may occur.

(This task was part of a full website redesign and rebranding project of an online women’s magazine. To learn more about the project we recommend reading this article.)

Website Speed

Part of the project objective was to increase the speed of the website. The key challenge was that every page serves advertisements and external resources such as trackers. Those are managed externally, so we could not make them faster. As a result, maximum scores were not achievable in all aspects, but the improvements are still significant.

We agreed to focus on specific Core Web Vitals (CWV) and closely related metrics: LCP, FID (or TBT as a closely correlated metric in synthetic measurements), CLS and FCP.

| Metric | Achievement | Measurements | Explanation |
|---|---|---|---|
| LCP | moderate ⇒ fast | down from 2.8s to 2.2s, measured on the real visitors of the site | considered fast under 2.5s (source) |
| FID | good | no accurate figure is available from before the project, but judging by the terrible TBT it was probably poor; after the update it is 18ms, measured on the real visitors of the site | considered good under 100ms (source) |
| TBT | very slow ⇒ fast | down from 4090ms to 10ms, measured by Lighthouse (Page Speed Insights) synthetic measurements | considered fast under 200ms and slow above 600ms (source) |
| CLS | poor ⇒ very good | down from 0.287 to 0.002, measured by Lighthouse (Page Speed Insights) synthetic measurements | considered good under 0.1 and poor above 0.25 (source) |
| FCP | moderate ⇒ fast | down from 2.8s to 1.2s, measured on the real visitors of the site | considered fast under 1.8s and slow above 3s (source) |
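
To make the thresholds in the table easier to apply, here is a minimal TypeScript sketch that rates a measured value against them. The names `Rating`, `THRESHOLDS` and `rate` are our own illustration and not part of any tool. Where the table states only a "good" bound (LCP and FID), the sketch leaves the "poor" bound undefined instead of guessing one.

```typescript
// Ratings used by this sketch; "needs improvement" corresponds to
// "moderate" in the table above.
type Rating = "good" | "needs improvement" | "poor";

interface Threshold {
  good: number;  // values strictly below this are considered good / fast
  poor?: number; // values strictly above this are considered poor / slow (if stated)
}

// Thresholds copied from the table above. Times are in milliseconds,
// CLS is unitless.
const THRESHOLDS: Record<string, Threshold> = {
  LCP: { good: 2500 },
  FID: { good: 100 },
  TBT: { good: 200, poor: 600 },
  CLS: { good: 0.1, poor: 0.25 },
  FCP: { good: 1800, poor: 3000 },
};

function rate(metric: string, value: number): Rating {
  const t = THRESHOLDS[metric];
  if (t === undefined) {
    throw new Error(`Unknown metric: ${metric}`);
  }
  if (value < t.good) {
    return "good";
  }
  if (t.poor !== undefined && value > t.poor) {
    return "poor";
  }
  // Either between the two bounds, or no "poor" bound is known.
  return "needs improvement";
}
```

For example, `rate("TBT", 4090)` gives `"poor"` and `rate("TBT", 10)` gives `"good"`, which matches the "very slow ⇒ fast" row of the table.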

The measurements with real users include the loading of advertisements and 3rd party resources, so the real-user figures would be even better if the whole infrastructure had been included and reworked in the project.

The synthetic measurements, however, were executed without loading any advertisements or external resources, to assess the performance of the website core without external overhead.

Performance before the project, in a mobile focused synthetic measurement of the home page:

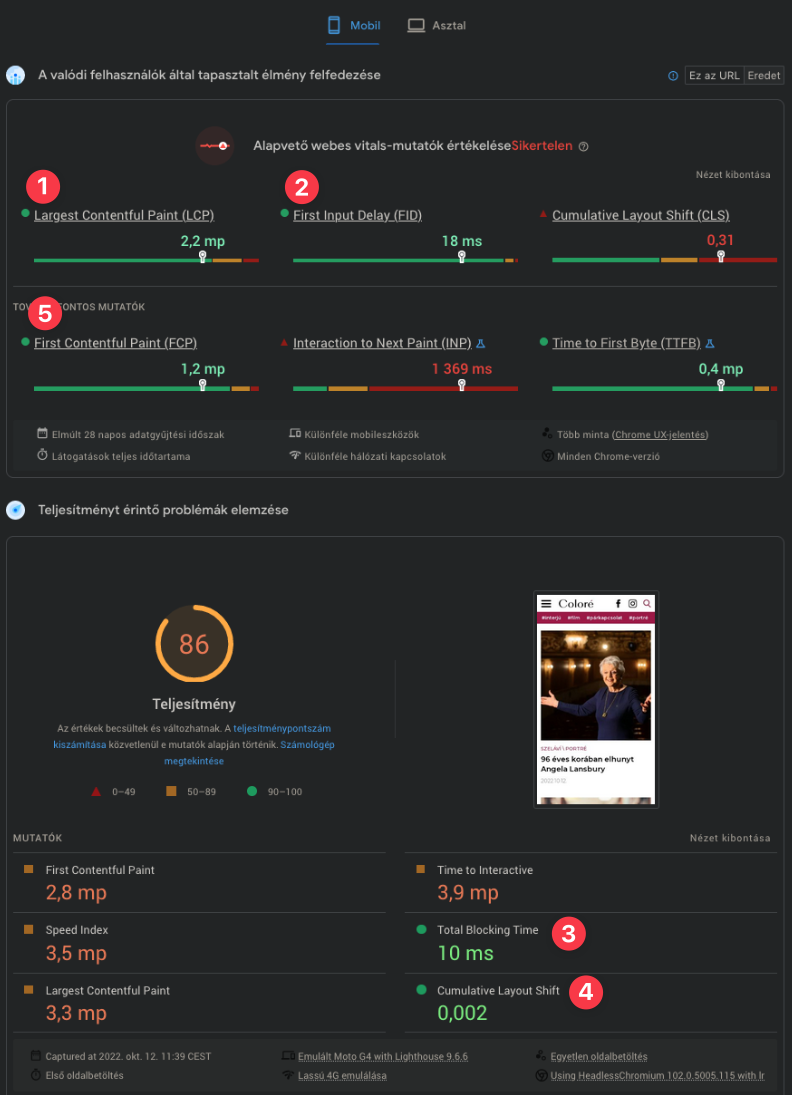

Performance of the same after the project:

Note the numbered items, which follow the order of the table above.

Some of the scores (LCP & FCP) are worse in synthetic measurements than in measurements with real users. This is mainly because good quality images are important for this website, and they hurt synthetic scores. However, we knew that typical users browse from mobile, either on a much better connection than the one Lighthouse simulates for synthetic measurements or on WiFi. In reality network speed is not an issue for them, so for these metrics it is fair to focus on the real measurements and ignore the synthetic ones.

Some of the real user measurements are also bad, such as CLS and INP. However, those are caused by the advertisements, which we could not really change because they are maintained as part of a separate project.

The desktop mode is in great shape in both synthetic and real measurements:

Note: the UI switched to Hungarian for some reason; “mp” means seconds.

How did we do it?

Although some of the customer requirements, such as reusing inefficient advertisement and measurement scripts from 3rd parties, limited what we could do, the site speed improved a lot. We can fairly conclude that, if it were not for those effects, the real user metrics would all be in the green for most mobile users as well.

During the project we had restricted freedom to apply optimizations, so it was even more important to use the remaining tools to the maximum to improve the computational and network efficiency of the website. It is a double win. On one hand, a more efficient website means better speed, improved Core Web Vitals scores, SEO benefits and a superior user experience. On the other hand, it means reduced electricity and computer hardware consumption, and therefore a reduced CO2 footprint and more sustainable operation. The key considerations applied to achieve these results are:

- Implementing as many features as economically viable with custom code instead of generic plugins: tailor-made code can be optimised for the very specific task, which means less bloat, lower resource usage and better performance

- Actively keeping an eye on the efficiency of implemented features and going the extra mile to compute the same results in a more efficient way

- Analysing and optimising resource-hungry database queries and extending the default database structure of the CMS with custom indexes to improve performance and reduce CPU load

- Carefully configuring the required image sizes depending on layout and viewport size, together with a rich source set on content images, so that browsers can choose the smallest image that still looks beautiful

- Hand-picking framework components (e.g. using a very stripped-down custom Bootstrap) and eliminating unnecessary resources loaded by the CMS by default

- Rigorously applying the most common techniques, such as serving images in modern formats to compatible browsers, doing effective minification, and choosing a minimal set of polyfills and compatibility bloat based on real user browser figures
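
To illustrate the image source set item in the list above, here is a minimal TypeScript sketch of how `srcset` and `sizes` attribute values could be generated for a content image. It is not the code used in the project. The `?w=` resizing parameter, the breakpoints and all function names are assumptions made for the example. `pickWidth` only approximates the choice a browser makes, since the real selection is left to the browser.

```typescript
// One layout rule: at or above `minViewportPx`, the image occupies `slot`.
interface SizeRule {
  minViewportPx: number;
  slot: string; // a CSS length such as "50vw" or "400px"
}

// Builds a srcset value with width descriptors.
// ASSUMPTION: the image service resizes via a hypothetical "?w=" parameter.
function buildSrcset(baseUrl: string, widths: number[]): string {
  return [...widths]
    .sort((a, b) => a - b)
    .map((w) => `${baseUrl}?w=${w} ${w}w`)
    .join(", ");
}

// Builds a sizes value; the widest breakpoint goes first because the
// browser uses the first media condition that matches.
function buildSizes(rules: SizeRule[], fallback: string): string {
  const conditions = [...rules]
    .sort((a, b) => b.minViewportPx - a.minViewportPx)
    .map((r) => `(min-width: ${r.minViewportPx}px) ${r.slot}`);
  return [...conditions, fallback].join(", ");
}

// Rough model of the selection: the smallest candidate that still covers
// the slot at the given device pixel ratio, else the largest available.
function pickWidth(widths: number[], slotCssPx: number, dpr: number): number {
  if (widths.length === 0) {
    throw new Error("No candidate widths given");
  }
  const sorted = [...widths].sort((a, b) => a - b);
  const needed = slotCssPx * dpr;
  const match = sorted.find((w) => w >= needed);
  return match ?? Math.max(...sorted);
}
```

With candidate widths of 400, 800 and 1200 pixels, a 360px wide slot on a screen with a device pixel ratio of 2 needs 720 image pixels, so `pickWidth([400, 800, 1200], 360, 2)` returns 800 instead of the full 1200px file.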

There are also some shortcomings of the deployment that we are well aware of. However, these all result from external constraints. To mention just one example, the web server was not configured to take advantage of the better performance of HTTP/2 at the time of go-live. Unfortunately we were not able to push the project to excellence in all areas, but it is common to have some immovable objects in the way, and the best solution has to be built around them. We think the results achieved prove that the effort is still worthwhile, even if not everything can be perfect.

Conclusions

We can only imagine the real impact of the optimised code and of the deep consideration given to computational complexity on the back-end side. However, we have seen enough to know that a server that was already touching its limits can now easily serve the website for many years to come. And since we know that computation uses energy, we can say we made an impact both by sustaining the use of existing hardware for a longer period and by achieving better speed with less energy than before.

In short, this is a win for all those involved: the users, the customer and the environment.

We hope that this website optimization case study was useful for you. If you think that your website could use such a renewal, contact us. If you are not sure what would be necessary, you can ask for our Web Design Audit service.